ACM MM 2026

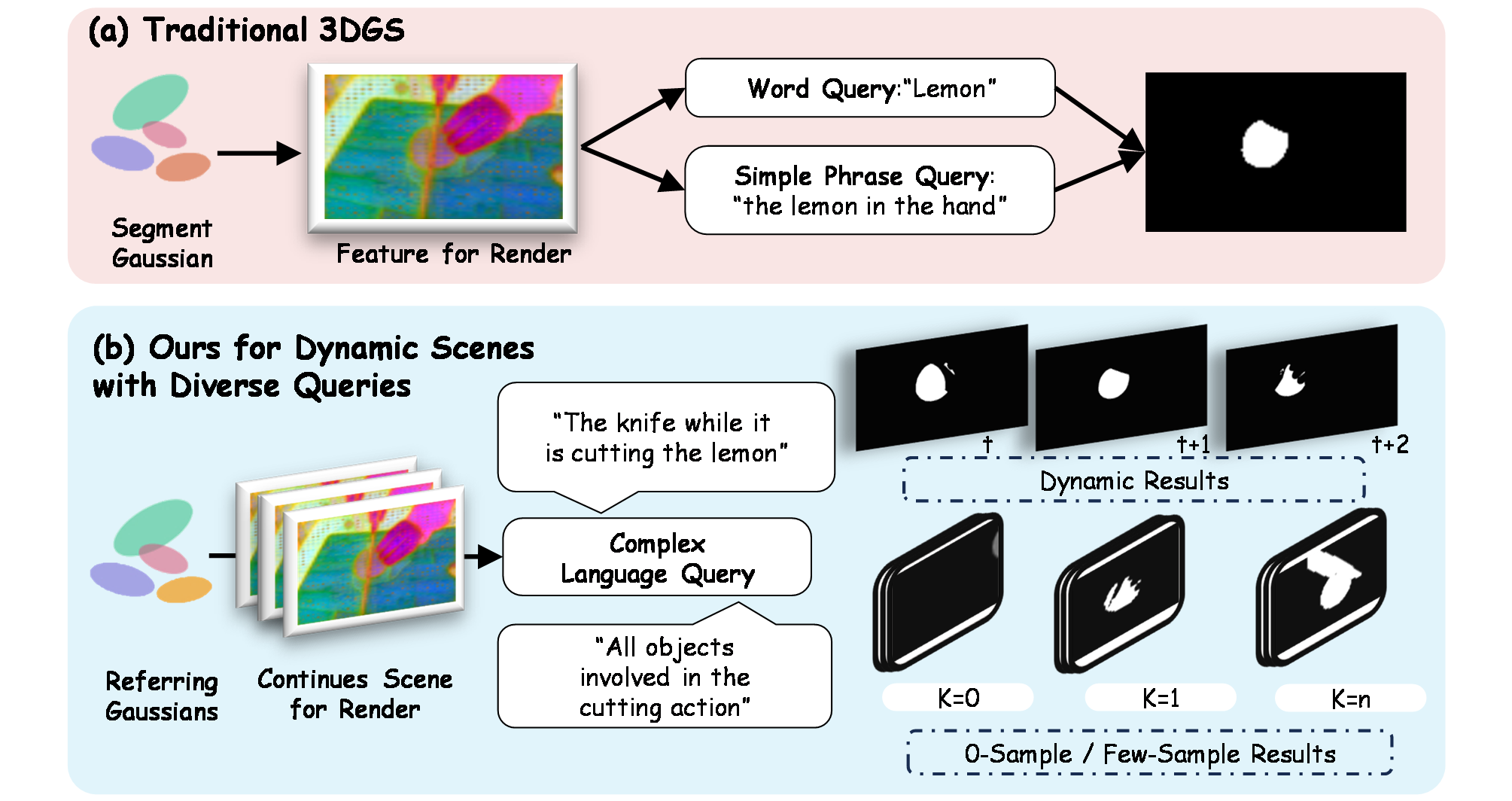

Dynamic 4D Gaussian representations have shown strong capability in reconstruction and novel-view synthesis, yet they remain insufficient for complex natural-language referring understanding in dynamic scenes. Existing 4D Gaussian methods are primarily designed for rendering-oriented dynamic modeling, while current semantic extensions often rely on scene-specific optimization or are evaluated on relatively simple query settings.

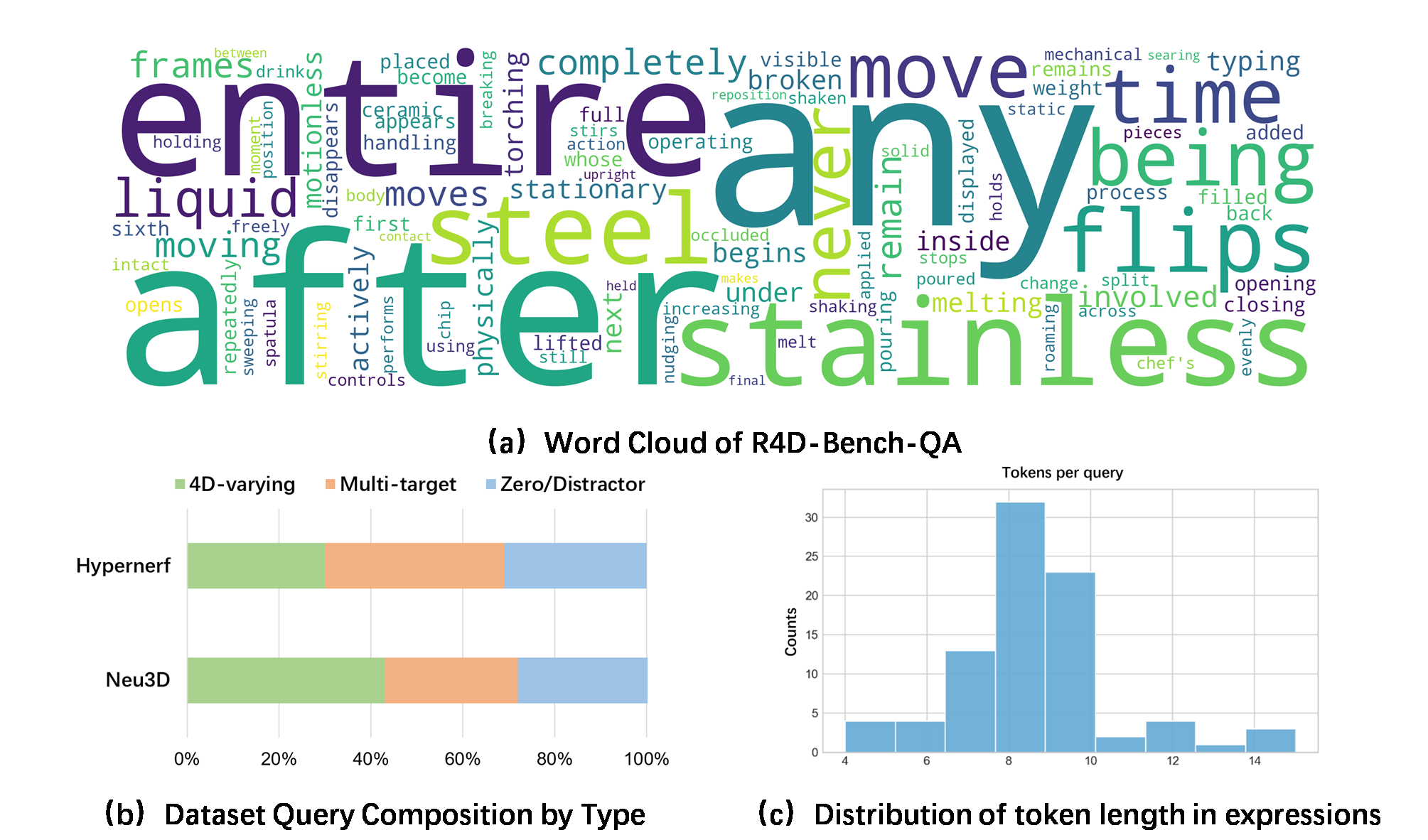

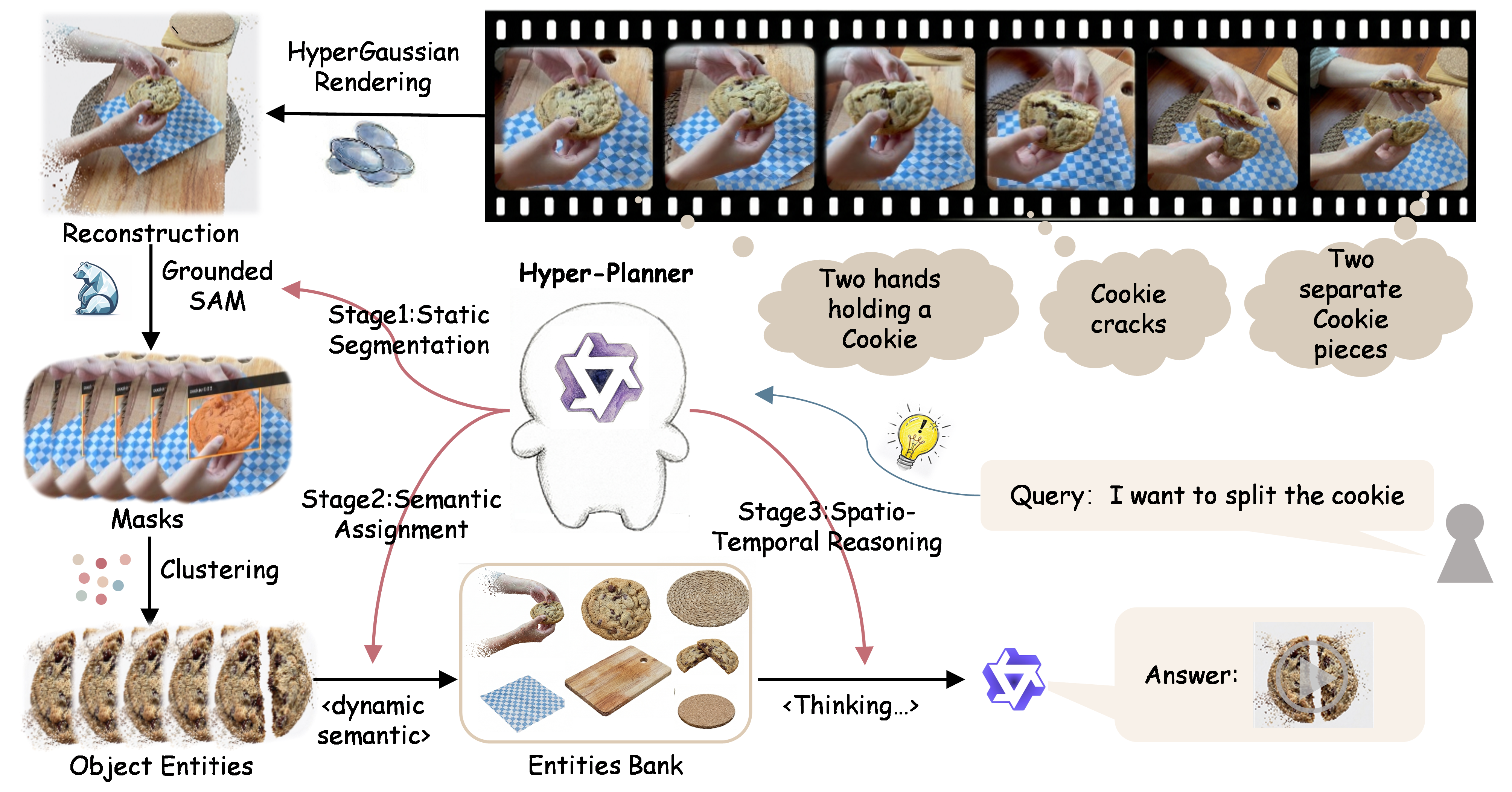

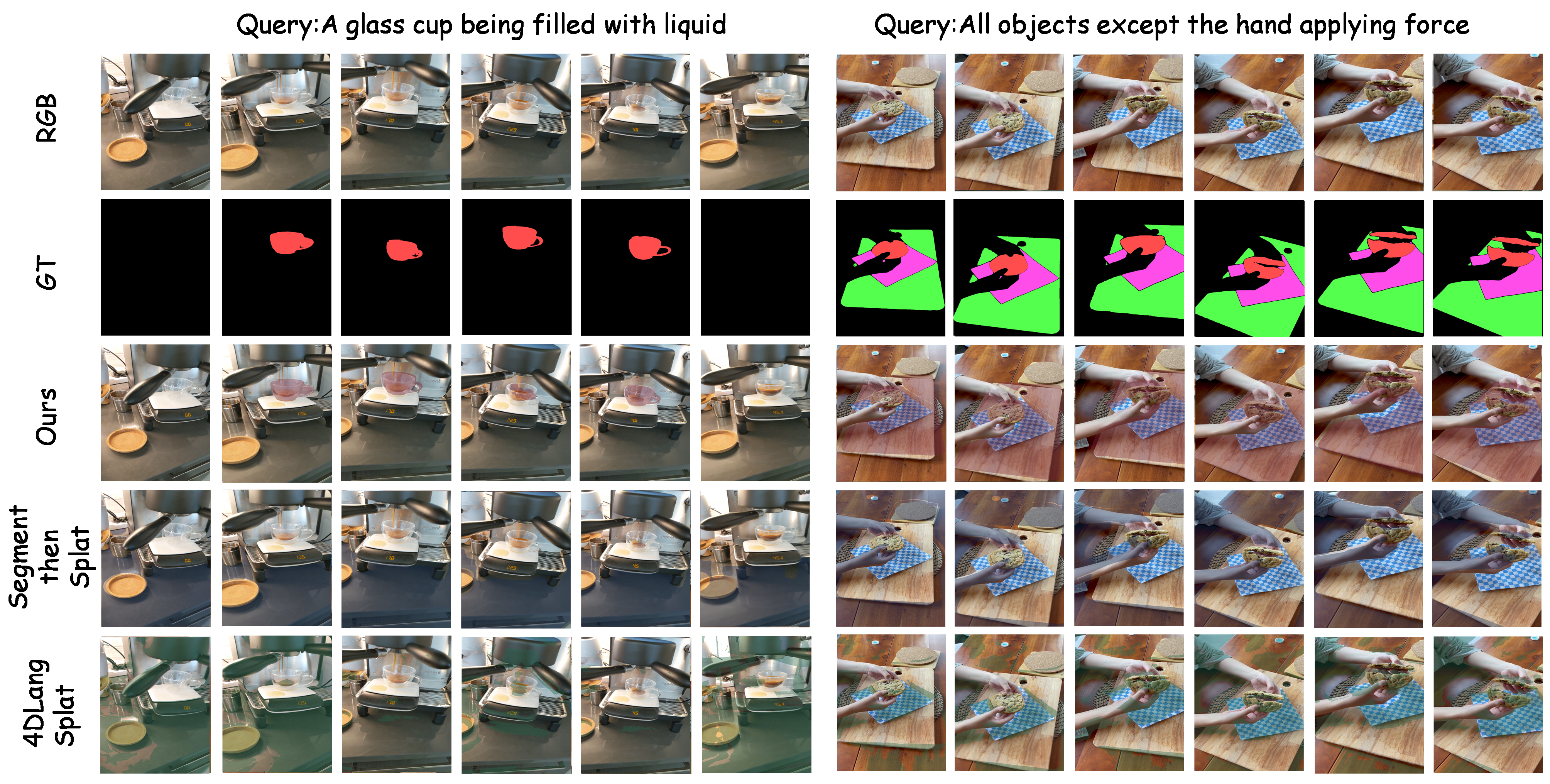

We study Referring 4D Gaussian Splatting (R4DGS), a task that targets realistic 4D referring understanding under temporally varying queries, multi-target and reasoning-intensive queries, and zero-target or distractor queries. To support this task, we introduce R4D-Bench-QA, a benchmark comprising 12 dynamic scenes, 266 structured query annotations, and four query categories. We further present HyperGaussian, a unified framework that couples generalized dynamic Gaussian reconstruction, entity-centric scene structuring via an EntityBank, and training-free referring inference via a Qwen-based Hyper-Planner. HyperGaussian decomposes complex queries into trackable object phrases and spatiotemporal constraints, and performs query-conditioned grounding without retraining the underlying scene representation.

R4D-Bench-QA is the first benchmark for Referring 4D Gaussian Splatting. It covers 12 dynamic scenes with 266 structured query annotations across four categories: snapshot, temporal-state, exclusion, and reasoning queries. Each query is paired with per-frame Gaussian-level ground-truth segmentation masks.
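As a rough illustration of what one annotation might look like, here is a minimal sketch of a query record. The class and field names (`QueryAnnotation`, `scene`, `masks`) and the example query are hypothetical and are not taken from the benchmark's released format; only the four category names come from the description above.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, Set


class QueryCategory(Enum):
    # The four query categories named in the benchmark description.
    SNAPSHOT = "snapshot"
    TEMPORAL_STATE = "temporal-state"
    EXCLUSION = "exclusion"
    REASONING = "reasoning"


@dataclass
class QueryAnnotation:
    scene: str                 # hypothetical scene identifier
    text: str                  # natural-language referring query
    category: QueryCategory
    # frame index -> indices of the Gaussians that belong to the target(s);
    # an empty set marks a frame in which nothing should be selected
    masks: Dict[int, Set[int]] = field(default_factory=dict)

    def is_zero_target(self) -> bool:
        """True when no frame contains any target Gaussian."""
        return all(len(ids) == 0 for ids in self.masks.values())


# Illustrative record only; the scene name and query text are made up.
example = QueryAnnotation(
    scene="scene_00",
    text="the cup after it is picked up",
    category=QueryCategory.TEMPORAL_STATE,
    masks={0: set(), 1: {4, 7}, 2: {4, 7, 9}},
)
```

Storing the mask as a set of Gaussian indices per frame keeps the ground truth at the Gaussian level, and a frame with an empty set represents the zero-target case.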

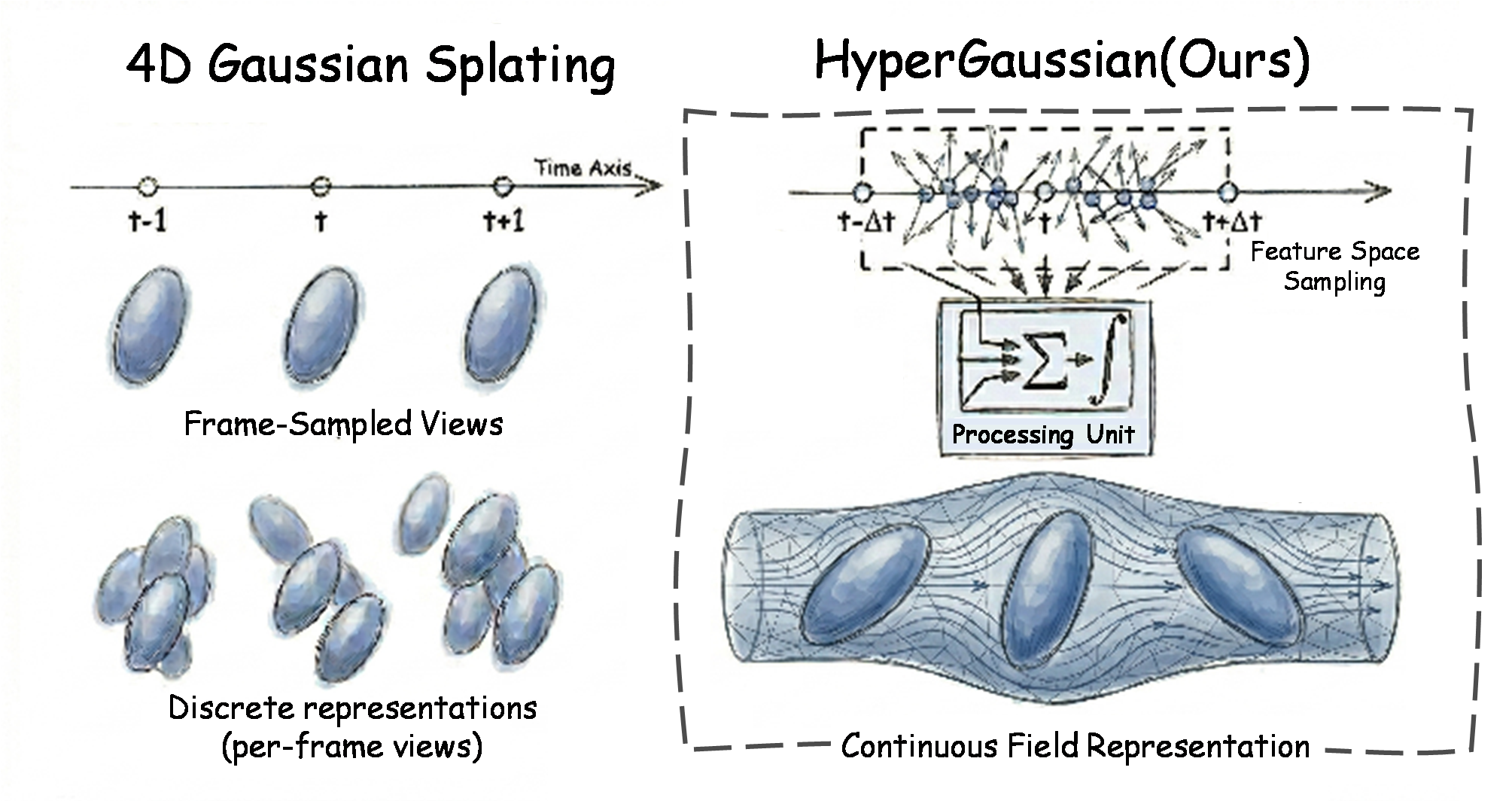

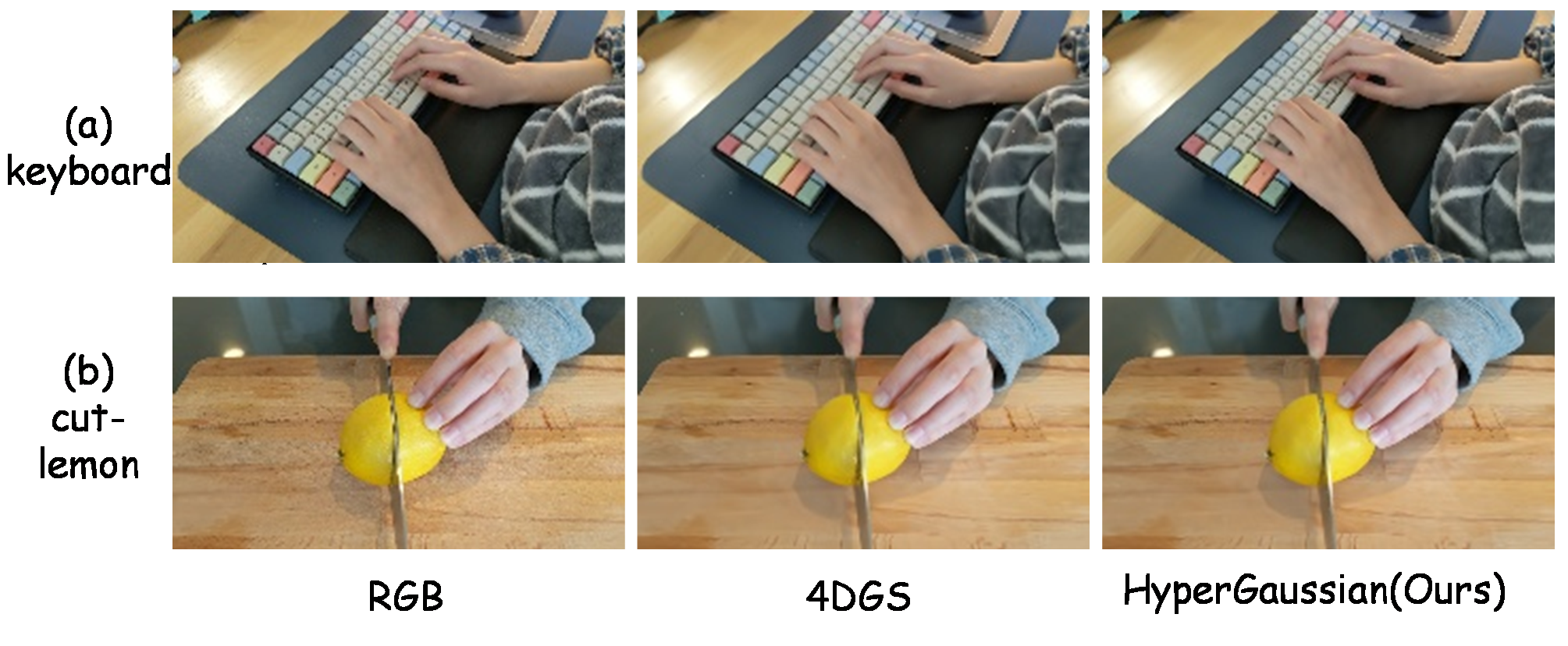

The scene is reconstructed as a set of 4D Gaussians with explicit temporal extent. A learned contextual time warp models deformable motion, producing a compact and differentiable dynamic scene representation that serves as the shared substrate for all downstream semantic stages.
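The page does not give the parameterization of the temporal extent or of the time warp. The sketch below assumes one common choice, a Gaussian falloff in time that modulates opacity, and stands in for the learned contextual time warp with a plain affine remapping. Both choices, and all function and parameter names, are illustrative assumptions rather than the authors' formulation.

```python
import math


def temporal_weight(t: float, center: float, extent: float) -> float:
    """Gaussian falloff in time: 1.0 at `center`, decaying with distance."""
    return math.exp(-0.5 * ((t - center) / extent) ** 2)


def warped_time(t: float, offset: float = 0.0, scale: float = 1.0) -> float:
    """Placeholder for a learned time warp (assumption: simple affine remap)."""
    return scale * t + offset


def opacity_at(t: float, base_opacity: float, center: float, extent: float,
               offset: float = 0.0, scale: float = 1.0) -> float:
    """Opacity of one 4D Gaussian at query time t."""
    return base_opacity * temporal_weight(warped_time(t, offset, scale), center, extent)


# A Gaussian centered at t = 0.5 contributes most at that time
# and fades out for earlier or later timestamps.
peak = opacity_at(0.5, base_opacity=0.8, center=0.5, extent=0.1)
faded = opacity_at(0.9, base_opacity=0.8, center=0.5, extent=0.1)
```

Every operation here is differentiable in `center`, `extent`, `offset`, and `scale`, which is the property the reconstruction stage relies on.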

Hyper-Planner (Qwen-based MLLM) — 3-stage referring pipeline

Stage 1 (static segmentation): Grounded-SAM2 is applied to reference frames to obtain per-entity masks. The masks are then propagated across time, using temporal consistency, to associate each Gaussian with a persistent entity identity.
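One simple way to turn propagated per-frame masks into a persistent identity per Gaussian is a majority vote over frames. The sketch below assumes that, for each Gaussian, we already know which entity mask it projects into in every frame. The voting rule, the `BACKGROUND` label, and the `min_votes` parameter are assumptions for illustration, not the method described on this page.

```python
from collections import Counter
from typing import Dict, List

BACKGROUND = -1  # label for "not covered by any entity mask in this frame"


def assign_entity_ids(observations: Dict[int, List[int]],
                      min_votes: int = 1) -> Dict[int, int]:
    """Map each Gaussian index to the entity id it is most often observed in.

    observations: Gaussian index -> one entity id per frame (BACKGROUND if none).
    """
    assignment = {}
    for gaussian, labels in observations.items():
        votes = Counter(label for label in labels if label != BACKGROUND)
        if not votes:
            assignment[gaussian] = BACKGROUND
            continue
        entity, count = votes.most_common(1)[0]
        assignment[gaussian] = entity if count >= min_votes else BACKGROUND
    return assignment


observations = {
    0: [1, 1, 2],                      # mostly inside entity 1
    1: [BACKGROUND, BACKGROUND],       # never inside any mask
    2: [3, BACKGROUND, 3],             # occluded in one frame, still entity 3
}
ids = assign_entity_ids(observations)
```

Voting across frames makes the assignment robust to a single bad mask or a brief occlusion.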

Stage 2 (semantic assignment): Each entity is assigned visual and semantic embeddings derived from representative crops and geometric priors. The results are stored in the EntityBank, a shared entity-centric scene memory that is reused across all queries.
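The EntityBank can be pictured as a small in-memory store keyed by entity identity. The following is a minimal sketch under that assumption. The `Entity` fields, the `rank` method, and the use of cosine similarity are hypothetical choices, since the page does not describe the bank's interface.

```python
import math
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class Entity:
    entity_id: int
    label: str                                   # short semantic description
    embedding: List[float]                       # visual/semantic embedding
    gaussian_ids: Set[int] = field(default_factory=set)


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class EntityBank:
    """Shared scene memory: built once per scene, read by every query."""

    def __init__(self) -> None:
        self._entities: Dict[int, Entity] = {}

    def add(self, entity: Entity) -> None:
        self._entities[entity.entity_id] = entity

    def rank(self, query_embedding: List[float]) -> List[Tuple[float, Entity]]:
        """Return (score, entity) pairs sorted from best to worst match."""
        scored = [(cosine(e.embedding, query_embedding), e)
                  for e in self._entities.values()]
        return sorted(scored, key=lambda pair: pair[0], reverse=True)


bank = EntityBank()
bank.add(Entity(1, "cup", [1.0, 0.0], {4, 7, 9}))
bank.add(Entity(2, "hand", [0.0, 1.0], {2, 3}))
best_score, best_entity = bank.rank([0.9, 0.1])[0]
```

Because the bank is populated once and only read afterwards, new queries do not require touching the underlying Gaussians.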

Stage 3 (spatio-temporal reasoning): The Hyper-Planner decomposes the natural-language query into object phrases, attributes, relations, temporal cues, and cardinality constraints. Candidate entities from the EntityBank are then scored and selected without any scene-specific retraining.
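To make the decomposition concrete, the sketch below represents a parsed query as a small plan object and applies a threshold plus a cardinality cap to pre-scored candidates. The `QueryPlan` schema, the `select` function, and the threshold value are assumptions for illustration. The page does not specify the planner's output format or its scoring rule, and in practice the plan would be produced by the MLLM rather than written by hand.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class QueryPlan:
    object_phrases: List[str]
    attributes: List[str]
    relations: List[str]
    temporal_cue: Optional[str]
    max_targets: Optional[int]   # cardinality constraint; None means unbounded


def select(scored: List[Tuple[int, float]], plan: QueryPlan,
           threshold: float = 0.5) -> List[int]:
    """Keep entity ids scoring at least `threshold`, best first,
    truncated to the plan's cardinality. May return an empty list."""
    kept = sorted((pair for pair in scored if pair[1] >= threshold),
                  key=lambda pair: pair[1], reverse=True)
    if plan.max_targets is not None:
        kept = kept[:plan.max_targets]
    return [entity_id for entity_id, _ in kept]


# Hand-written plan for a query such as "the red cup after it is lifted".
plan = QueryPlan(
    object_phrases=["cup"],
    attributes=["red"],
    relations=[],
    temporal_cue="after it is lifted",
    max_targets=1,
)
candidates = [(3, 0.9), (5, 0.7), (8, 0.2)]   # (entity id, match score)
chosen = select(candidates, plan)
```

Returning an empty list when no candidate clears the threshold is one way to handle zero-target and distractor queries without forcing a selection.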

Joint evaluation on R4D-Bench-QA (referring + reconstruction)

| Method | Acc ↑ | vIoU ↑ | PSNR ↑ | SSIM ↑ | LPIPS ↓ |
|---|---|---|---|---|---|
| Segment then Splat | 55.6 | 28.4 | 20.3208 | 0.7027 | 0.3971 |
| 4D LangSplat | 58.4 | 32.1 | 20.3208 | 0.7027 | 0.3971 |
| HyperGaussian (Ours) | 76.5 | 34.4 | 20.4159 | 0.7069 | 0.4082 |
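The page does not define how vIoU is computed. One plausible reading, assumed here, is the intersection-over-union between predicted and ground-truth Gaussian sets in each frame, averaged over the frames of a query. The function names and the convention of scoring an empty prediction against an empty ground truth as 1.0 are assumptions, not the benchmark's stated protocol.

```python
from typing import Dict, Set


def frame_iou(pred: Set[int], gt: Set[int]) -> float:
    """IoU of two index sets; two empty sets count as a perfect match."""
    if not pred and not gt:
        return 1.0
    return len(pred & gt) / len(pred | gt)


def video_iou(pred_by_frame: Dict[int, Set[int]],
              gt_by_frame: Dict[int, Set[int]]) -> float:
    """Mean per-frame IoU over all annotated frames.

    Frames missing from the prediction are treated as empty predictions.
    """
    frames = sorted(gt_by_frame)
    total = sum(frame_iou(pred_by_frame.get(f, set()), gt_by_frame[f])
                for f in frames)
    return total / len(frames)


pred = {0: {1, 2}, 1: set()}
gt = {0: {2, 3}, 1: set()}
score = video_iou(pred, gt)   # frame 0 gives 1/3, frame 1 gives 1.0
```

Under this convention a method is rewarded for correctly selecting nothing in frames where the target is absent.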

Generalization on 4D LangSplat HyperNeRF split (americano, split-cookie, espresso)

| Method | Acc ↑ | vIoU ↑ |
|---|---|---|
| LangSplat | 54.27 | 24.13 |
| Deformable CLIP | 65.01 | 45.37 |
| Non-Status Field | 84.58 | 62.00 |
| 4D LangSplat | 88.86 | 66.14 |
| HyperGaussian (Ours) | 91.62 | 66.48 |

Module ablation on R4D-Bench-QA

| Variant | Acc ↑ | vIoU ↑ |
|---|---|---|
| 4DGS reconstruction (no HyperGS) | 62.9 | 31.5 |
| w/o Stage 1 static segmentation | 48.6 | 17.2 |
| w/o Stage 2 semantic assignment | 62.9 | 29.8 |
| w/o Stage 3 spatio-temporal reasoning | 36.0 | 26.1 |
| HyperGaussian (full) | 76.5 | 34.4 |

@inproceedings{hypergaussian2026,
  title     = {HyperGaussian: Referring 4D Gaussian Splatting},
  author    = {HyperGaussian Authors},
  booktitle = {Proceedings of the 34th ACM International Conference on Multimedia},
  year      = {2026}
}

This project builds on 4DGaussians, Grounded-SAM2, and Qwen. The project page template is adapted from GeoThinker and Nerfies.